Introduction To Cloudflare Workers

Janne Kemppainen

I’ve been interested in serverless for a while but haven’t had the chance to try anything out. Recently I found out about Cloudflare Workers, a rather new serverless solution by the CDN company that powers 10% of the Internet. In this post I’ll tell you how it differs from its main competitors and how to get started.

I’m not being paid by Cloudflare to write this post.

Traditional Functions as a Service

The serverless space was pioneered by AWS Lambda, which was released in 2014. The idea was to transform the pricing model so that you pay per actual usage. AWS Lambda is a Function as a Service (FaaS) platform that takes away the need to manage virtual machines, so you only have to worry about the code itself.

With virtual machines you need to provision some amount of machines beforehand and scale up and down depending on the load. You’d still need to pay for the resources even if nobody was actually using them as you can’t just shut everything down when there are no users.

The value proposition of AWS Lambda was that you’d need to pay only for the actual requests and computing time. You could therefore deploy multiple functions online without paying anything until they were actually used.

The implementation of Lambda functions is based on containers. Each function runs in a container, and the number of containers is adjusted based on the number of requests. The system handles scaling automatically for you.

Lambda functions can be written in Node.js, Python, Java, Ruby, C#, Go and PowerShell. You can also use the Lambda runtime interface to add support for other languages.

AWS Lambda has some drawbacks too. As it is not feasible to keep all function containers running all the time, AWS will automatically shut down those that haven’t received requests in a while. Therefore it can take a bit longer to get a response from a function that has gone to sleep.

When the demand increases, Lambda needs to spin up more containers to handle the load. In the worst case the startup latency can be multiple seconds. These delays are called cold starts.

Google has its own serverless offering too: Google Cloud Functions. At the moment the supported languages are Node.js, Python and Go. The third major option is Microsoft Azure Functions.

All these alternatives are quite similar in the end, with some differences in pricing models and the available APIs.

This site is hosted on Netlify, which is a service for hosting static websites. They also have a FaaS offering called Netlify Functions, which uses AWS Lambda under the hood. Their idea is to make it easier to add dynamic behavior to static websites without having to manage the cloud environment.

About Workers

While traditional serverless functions run on containers the team behind Cloudflare Workers decided to take a different approach and run JavaScript functions in V8 isolates. This is the same technology that is used in the Google Chrome browser.

Isolates work kind of like tabs in a browser. When you’re browsing the web your tabs are also isolated from each other so some random site can’t fiddle with data from your banking application. This lets Cloudflare run untrusted code from multiple customers inside the same instance as the isolates aren’t allowed access to each other’s memory.

This means that they can cram more functions in the same space with less overhead from the system (compared to virtual machines or containers). Sharing the runtime also means that the memory overhead of individual functions is reduced, making it more affordable to host the service. Apparently, the functions are also fast to start as launching an isolate takes around 5 milliseconds. This is because the system doesn’t need to launch new containers and processes but it can use the existing V8 process.

This should be a significant improvement over the cold starts on other serverless platforms. Though, with constant usage the Lambda function containers should also stay alive.

Workers scripts are deployed to all Cloudflare data centers around the world within seconds. Therefore users can always be served from the location that is closest to them. With other cloud providers you’d have to select the regions and availability zones where you want to deploy your functions, which might not be optimal for all users.

You can read more about Workers from the Cloudflare blog.

Costs comparison

As I’m doing these cloud things just for fun and learning purposes at the moment the costs are a big factor to me. I’m not ready to pay lots and lots of money for pet projects. Luckily cloud functions are really affordable in general. The most limiting factor is that if you want to build something more complicated you’ll probably need a database which will typically cost something. Storage and traffic should also be considered when comparing costs.

Cloudflare Workers offers a very generous free tier with 100,000 requests a day and 10 ms of CPU time for each request. This doesn’t mean that the function must return in 10 ms, as only the time required for execution is counted. For example, waiting for external services to respond doesn’t count towards the limit.

Here is a comparison of the free resources that you get with each cloud provider:

FaaS always free tier limits

| | AWS Lambda | GCP Functions | Azure Functions | Cloudflare Workers |
|---|---|---|---|---|
| Requests | 1M/month | 2M/month | 1M/month | 100,000/day |
| Compute | 400,000 GB-s | 400,000 GB-s, 200,000 GHz-s | 400,000 GB-s | 10 ms/request |
| Outbound data | 1 GB | 5 GB | 5 GB | unlimited |

With the three major FaaS providers the first 1 to 2 million requests are free along with some amount of computation resources. Typically this is measured in gigabyte-seconds but Google is also measuring gigahertz-seconds separately.
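To make the gigabyte-second unit more concrete, here is a small sketch of how the free compute allowance could be translated into invocations. The helper name, the 128 MB / 100 ms example workload and the 1 GB = 1024 MB conversion are my own assumptions for illustration; check each provider's documentation for the exact rules.

```javascript
// Free compute allowance from the table above.
const FREE_GB_SECONDS = 400000

// Hypothetical helper: gigabyte-seconds consumed by a batch of invocations.
// Assumes 1 GB = 1024 MB and that every invocation runs equally long.
function gigabyteSeconds(memoryMb, durationMs, invocations) {
  return (memoryMb / 1024) * (durationMs / 1000) * invocations
}

// Assumed example workload: a 128 MB function that runs for 100 ms.
const perInvocation = gigabyteSeconds(128, 100, 1)
console.log('GB-s per invocation:', perInvocation)
console.log('Invocations covered by the free tier:', FREE_GB_SECONDS / perInvocation)
```

With a small function like this the request limit is likely to be reached well before the compute limit.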

The compute time required by the functions is typically rounded up to the nearest 100 milliseconds so by design users are overpaying for 50 ms on average.
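The effect of the rounding can be sketched with a few lines of JavaScript. The function name is hypothetical, and the 100 ms step is taken from the paragraph above; the average is computed over durations of 1 to 100 ms, assuming each is equally likely.

```javascript
// Billing granularity described above.
const BILLING_STEP_MS = 100

// Hypothetical helper: rounds the actual duration up to the next billing step.
function billedDurationMs(actualMs, stepMs = BILLING_STEP_MS) {
  return Math.ceil(actualMs / stepMs) * stepMs
}

console.log(billedDurationMs(100)) // 100
console.log(billedDurationMs(101)) // 200

// Average overpayment for durations of 1..100 ms, each equally likely.
let totalOverpayMs = 0
for (let ms = 1; ms <= 100; ms++) {
  totalOverpayMs += billedDurationMs(ms) - ms
}
console.log(totalOverpayMs / 100) // 49.5
```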

Cloudflare only limits the amount of CPU time the function has available and does not use it as a basis for billing.

Like in their CDN service outbound data from Cloudflare Workers doesn’t cost anything. The other cloud providers have a small amount of free data each month after which you’ll have to pay per use.

Here are the costs after the free tiers:

FaaS normal pricing

| | AWS Lambda | GCP Functions | Azure Functions | Cloudflare Workers |
|---|---|---|---|---|
| 1M requests | $0.20 | $0.40 | $0.20 | $0.50 |
| Compute (GB-s) | $0.0000166667 | $0.0000025 | $0.000016 | 50 ms/request |
| Compute (GHz-s) | - | $0.0000100 | - | - |
| Inbound data | free | free | free | free |
| Outbound data | $0.09/GB | $0.12/GB | $0.087/GB | free |
| Storage | $0.023/GB | $0.026/GB | $0.0184/GB | $0.50/GB (KV) |
| API gateway | $3.50/1M | $3.00/1M | $0.21/h | - |

As you can see Workers has a higher price per million requests but on the other hand compute resources are not billed separately. The paid Workers plans raise the compute time limit to 50 milliseconds per request.

The paid plan starts from $5/month, which gives you 10 million Workers requests. Additional requests are billed at the $0.50/million rate. You also get 1 GB of stored data, 10 million reads and 1 million write, list and delete operations for the KV storage. Each additional stored gigabyte or million reads is also $0.50, and additional write, list and delete operations cost $5 per million.

Storage in Workers is therefore more expensive than with other cloud providers, but it is primarily meant for key-value data. To serve larger files you should probably use an object storage service such as Amazon S3.
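As a rough sketch, the request part of the paid plan could be estimated like this. The function name is made up, KV storage and operations are left out, and I'm assuming that additional requests are billed pro rata instead of in whole-million blocks. The prices are the ones quoted above and may have changed since.

```javascript
// Figures quoted in the text above.
const BASE_FEE_USD = 5                // monthly price of the paid plan
const INCLUDED_REQUESTS = 10_000_000  // requests covered by the base fee
const USD_PER_EXTRA_MILLION = 0.5     // rate for additional requests

// Hypothetical helper: estimates the monthly bill for requests only.
// Assumes pro rata billing for the requests that exceed the included amount.
function estimateWorkersCost(requestsPerMonth) {
  const extraRequests = Math.max(0, requestsPerMonth - INCLUDED_REQUESTS)
  return BASE_FEE_USD + (extraRequests / 1_000_000) * USD_PER_EXTRA_MILLION
}

console.log(estimateWorkersCost(3_000_000))  // 5
console.log(estimateWorkersCost(25_000_000)) // 12.5
```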

Performance

These performance comparisons have been taken from https://serverless-benchmark.com/. It seems that Cloudflare Workers has the lowest overall overhead of the FaaS solutions, with AWS Lambda taking second place. According to this blog post by the benchmark author, the overhead is calculated as the time from request to first byte minus the function execution duration.

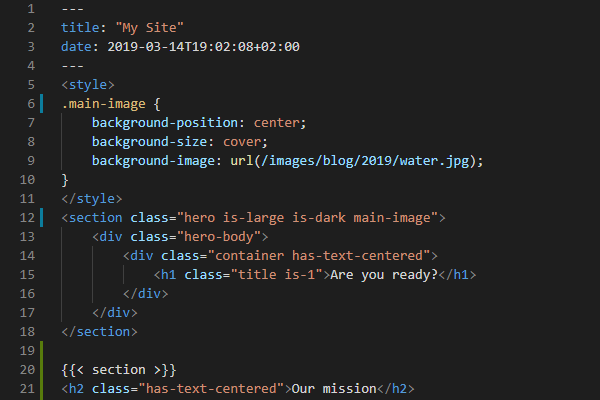

Serverless benchmark overhead with 50 concurrent requests

The first image shows the results for 50 concurrent requests where the next request is executed as soon as a response has been received in an attempt to keep the concurrency constant.

I find the numbers really interesting, especially the comparatively poor performance of Google Cloud Functions and Azure Functions. It feels weird that they would add on average hundreds of milliseconds of overhead to each request. And that doesn’t even count the actual function execution time!

So according to this it seems that Google and Microsoft are doing something wrong with higher loads. Maybe they can’t handle the burst of messages and scale up quickly enough.

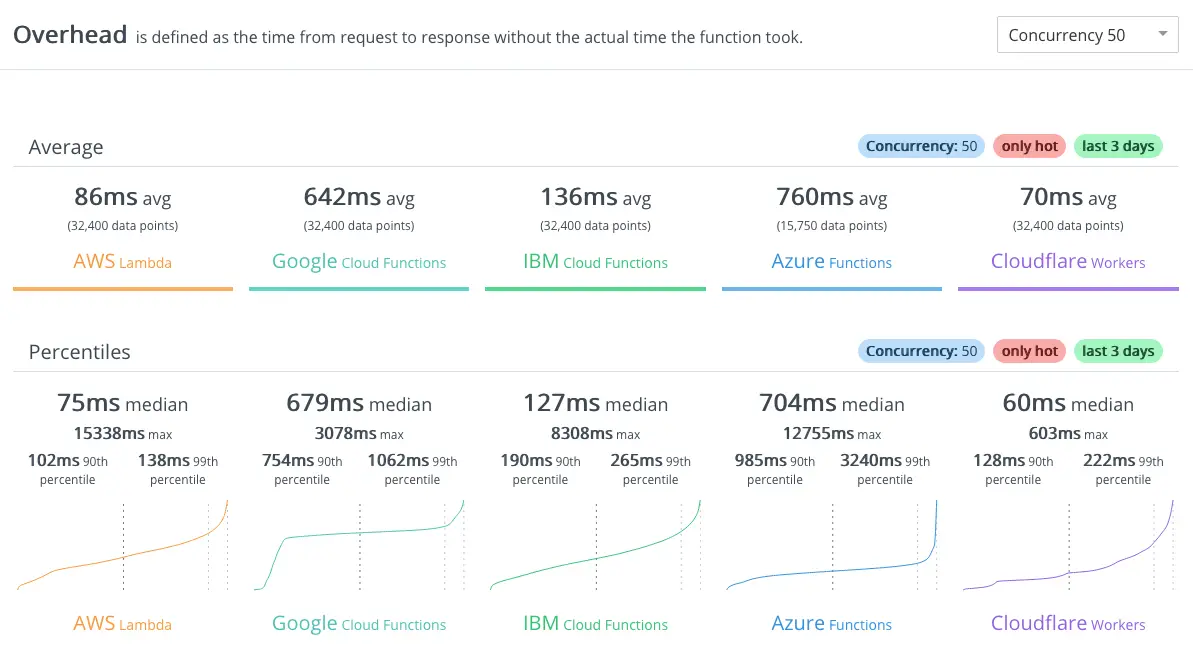

Serverless benchmark overhead with 1 concurrent request

When compared to just one concurrent request the response times look a lot different. Google Cloud Functions is now the clear winner with just 22 milliseconds average overhead.

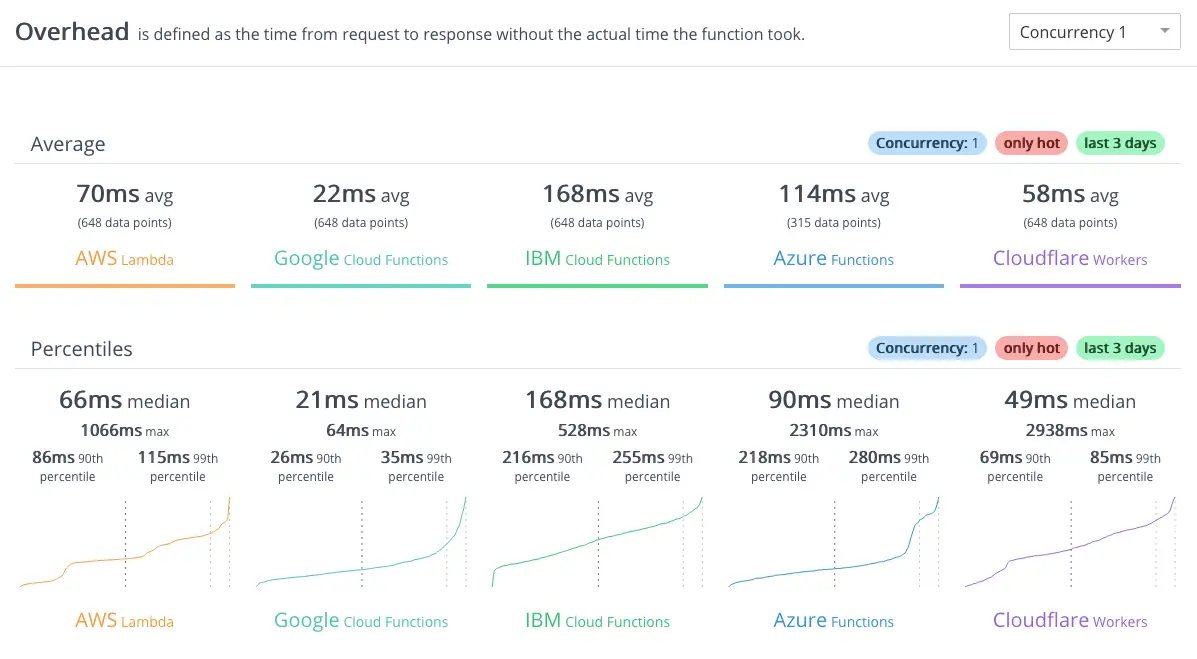

Coldstart performance

As already mentioned cold starts can happen when the functions are called infrequently. Workers wins here with close to no effect on the average response time. Google Cloud Functions also seems to achieve quite decent results.

The Lambda cold start takes over half a second, but Azure Functions has a horrible six-second response time.

This comparison didn’t take into account the processing resources. A Cloudflare Worker has 128MB of memory available while the other FaaS providers let you select the amount of memory and CPU performance according to your needs. Also the limited CPU time that a Workers script is allotted can take some use cases out of the equation.

Getting started with Workers

First off, I want to start by saying that Workers has comprehensive documentation, so that should be the first place to look for more information. While it is possible to use WebAssembly to support different programming languages, here I will stick to JavaScript only.

Note that despite using V8, Workers doesn’t use Node.js. The available APIs are implemented by Cloudflare. However, npm packages are supported.

Now that that is out of the way it’s time to start building. The easiest way to start is to first check the Workers Playground. It will give you an online editor where you can run some test code.

Here is a super simple dummy example for you to test on the playground.

    addEventListener('fetch', event => {
      event.respondWith(handleRequest(event.request))
    })

    async function handleRequest(request) {
      return new Response(request.url)
    }

Copy and paste the code to the code editor field on the playground page and click the Update button. The URL that was given as the parameter to the worker will be printed in the preview area. How exciting, huh!?

Install local development tools

While tinkering with the playground is cool and all the real development should happen on your own machine. To get started you need to install some tools. I’m assuming that you have npm available, and if not then you should go ahead and install Node.js first.

Next, you’ll need to install Wrangler which is the command line tool that is used for managing Workers scripts. Use the following npm command:

    >> npm i @cloudflare/wrangler -g

Create a new project

Creating a new Workers project is easy with Wrangler. For a typical JavaScript project all you need to do is to use the following command:

    >> wrangler generate my-first-worker
    ⬇️ Installing cargo-generate...
    🐑 Generating a new webpack worker project with name 'my-first-worker'...
    🔧 Creating project called `my-first-worker`...
    ✨ Done! New project created /Users/janne/personal/workers/my-first-worker

The generate command has an optional parameter to include a template. This can speed up your initial development if there is a template that matches your use case. The currently available templates are listed here and you should also be able to create your own templates as they seem to be just GitHub repositories. Example:

    >> wrangler generate my-templated-worker https://github.com/cloudflare/worker-template-router

You can now navigate to the project directory.

    >> cd my-first-worker

Check the contents of the directory:

    >> ls
    CODE_OF_CONDUCT.md README.md wrangler.toml
    LICENSE_APACHE index.js
    LICENSE_MIT package.json

Now you’re ready to work on the script.

Write some code

The code for the worker should be placed in the index.js file. If you now open the file in your text editor it should look like this:

    addEventListener('fetch', event => {
      event.respondWith(handleRequest(event.request))
    })

    /**
     * Fetch and log a request
     * @param {Request} request
     */
    async function handleRequest(request) {
      return new Response('Hello worker!', { status: 200 })
    }

The file consists of two parts: registering an event listener, and defining the handler for incoming events. The first lines are basically always the same.

The handler is the place where the magic should happen. It takes the request object as a parameter and after processing it should return a Response object or a Promise<Response>.
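To illustrate the two return styles, here is a minimal sketch with one plain handler and one async handler. The handler names and the JSON payload are made up for this example. The listener is only registered when a global addEventListener exists, which is an extra guard I added so that the same file can also be loaded in Node.js 18 or newer for a quick check.

```javascript
// Plain function: returns a Response object directly.
function handleSync(request) {
  return new Response('Hello from a plain function', { status: 200 })
}

// Async function: always returns a Promise that resolves to a Response.
async function handleAsync(request) {
  const body = JSON.stringify({ url: request.url, method: request.method })
  return new Response(body, {
    status: 200,
    headers: { 'content-type': 'application/json' },
  })
}

// Guard added for this sketch: only register the listener in an environment
// that provides the global addEventListener, such as the Workers runtime.
if (typeof addEventListener === 'function') {
  addEventListener('fetch', event => {
    // respondWith accepts either a Response or a Promise<Response>.
    event.respondWith(handleAsync(event.request))
  })
}
```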

Let’s create a super simple function that isn’t totally useless. It will tell you the country code of your IP address. Keep the event listener part as it is but change the handleRequest function to the following:

    async function handleRequest(request) {
      const countryCode = request.headers.get("cf-ipcountry");
      return new Response('Your country code is: ' + countryCode, { status: 200 })
    }

I know this is an almost embarrassingly simple example but it is what it is 😄. Maybe a more advanced application could be to redirect to a country specific page based on the address. This is just the starting point for you.

Cloudflare makes it really easy to check the user’s country as they put the two-letter country code in the cf-ipcountry header. Therefore all we need to do in the request handler is to read the header value and write it to the response.
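The country specific redirect idea could be sketched roughly like this. The country-to-path mapping and the countryRedirectUrl helper are hypothetical and only serve as an illustration; the guard around addEventListener is again there so that the file can also be loaded outside the Workers runtime.

```javascript
// Hypothetical mapping from country codes to localized paths.
const COUNTRY_PATHS = new Map([
  ['FI', '/fi/'],
  ['SE', '/sv/'],
])

// Returns the redirect target for a country, or null when no redirect applies.
function countryRedirectUrl(requestUrl, countryCode) {
  const path = COUNTRY_PATHS.get(countryCode)
  if (!path) return null
  const url = new URL(requestUrl)
  // Avoid a redirect loop when the visitor is already on the localized page.
  if (url.pathname.startsWith(path)) return null
  url.pathname = path
  return url.toString()
}

async function handleRequest(request) {
  const countryCode = request.headers.get('cf-ipcountry')
  const target = countryRedirectUrl(request.url, countryCode)
  if (target) {
    // Temporary redirect to the localized page.
    return Response.redirect(target, 302)
  }
  return new Response('Your country code is: ' + countryCode, { status: 200 })
}

if (typeof addEventListener === 'function') {
  addEventListener('fetch', event => {
    event.respondWith(handleRequest(event.request))
  })
}
```

Keeping the URL logic in a separate pure function makes it easy to test without deploying anything.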

Deploy to the cloud

To get your new function published the first thing you need to do is to create a Cloudflare account if you don’t already have one.

Next, create a workers.dev subdomain. When logged in to your Cloudflare dashboard you should see the option to get started with Workers.

Click here to get started with workers

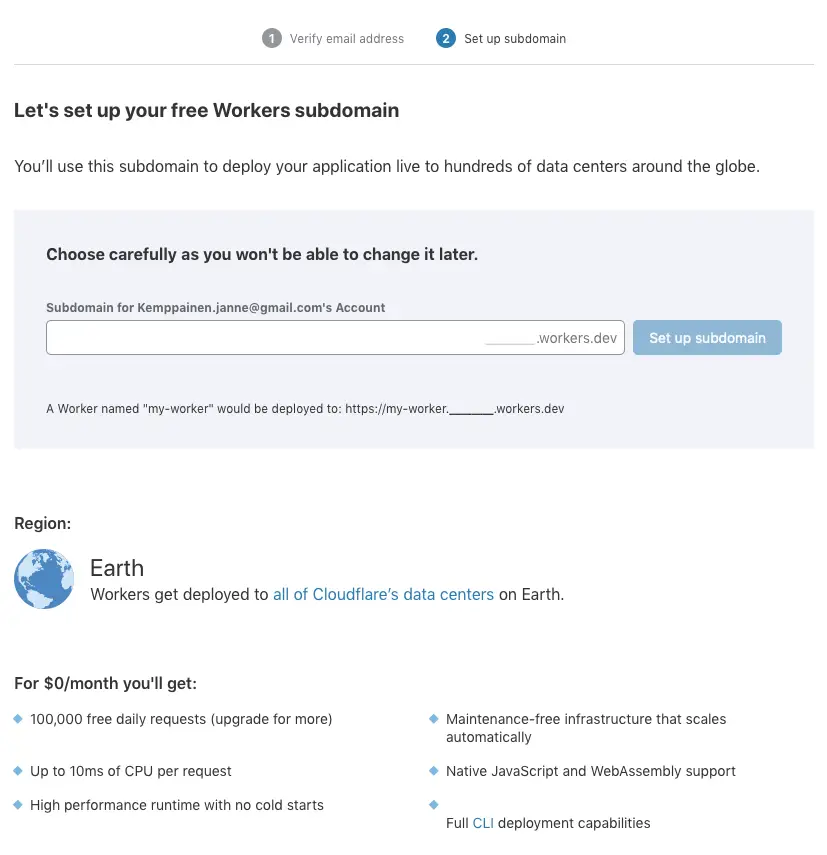

After verifying your e-mail you are asked to select a workers.dev subdomain. You won’t be able to change it later.

Configure your own subdomain

Now you’re ready to set up the Wrangler configurations. On the Cloudflare dashboard click Workers and you should see your Account ID on the right side of the page, under the API section. There you will also find the link to Get your API key. Use that link to go to your profile page and scroll down to the API Keys section to view your Global API Key. Copy the value and configure Wrangler:

    >> wrangler config <email> <apikey>

Next, edit the wrangler.toml file and fill in your account_id from the dashboard.

Now you can build the project and deploy a preview to the cloud:

    >> wrangler build
    >> wrangler preview

With the preview you can test that your code works before deploying it to production. When you are satisfied with the code you can publish it:

    >> wrangler publish
    ⬇️ Installing wasm-pack...
    ⬇️ Installing wranglerjs...
    ✨ Built successfully.
    🥳 Successfully published your script.
    🥳 Successfully made your script available at my-first-worker.pakstech.workers.dev
    ✨ Success! Your worker was successfully published. ✨

The worker is now available under your workers.dev subdomain. Go see and verify that it works. Try out some proxies to access the page from different countries.

You can manage your workers from your Cloudflare dashboard. Go ahead and delete the test worker that we just created, as you probably don’t want to keep it around anyway.

Conclusion

Personally I find Workers to be an exciting alternative to other cloud platforms. They have a distinctly different take on the serverless business and maybe at some point we’ll see similar services from the others too. I think the true power of Workers comes when used in combination with the Cloudflare CDN service.

This was a short introduction and now you should hopefully have the tools to start creating your own awesome projects. Right now I’m trying to think of some ways that I could use Workers in this static blog. Any ideas are welcome.

Post your thoughts about Cloudflare Workers in the comments below or hit me up on Twitter @pakstech.

Discuss on Twitter

The idea of @Cloudflare Workers seemed so promising that I decided I should take a look. Wrote an introductory blog post as a result https://t.co/Am0HF82UyQ

— Janne Kemppainen (@pakstech) June 20, 2019
